Multiple Audits ≠ Multilayer Security. Stop Paying for 3 Audits

$2.54B lost despite audits. Why multilayer security, not more audits, is the only real protocol defense.

Three audits are not three layers of security. They're one layer repeated three times.

Cetus Protocol had three audits. Lost $223 million. Balancer V2 had eleven audits from four firms. Lost $125 million. Drift Protocol was audited twice this year. Lost $285 million five days ago.

Here's what none of them had: fuzzing that would have caught Cetus's overflow in seconds. AI analysis that would have flagged Balancer's rounding mismatch. An OpSec audit that would have caught Drift's zero-timelock multisig migration. Monitoring that could have detected the attack before execution, as it did for Venus Protocol, which recovered every dollar and cost its attacker $3 million.

In 2025, 89 incidents caused $2.54 billion in losses. The average exploit drained $28.5 million. The protocols getting hit aren't unaudited. They confused audited with secure.

This article is about the alternative: not more audits, but different layers. It also explains why a single team running all of them matters more than five vendors who never talk to each other.

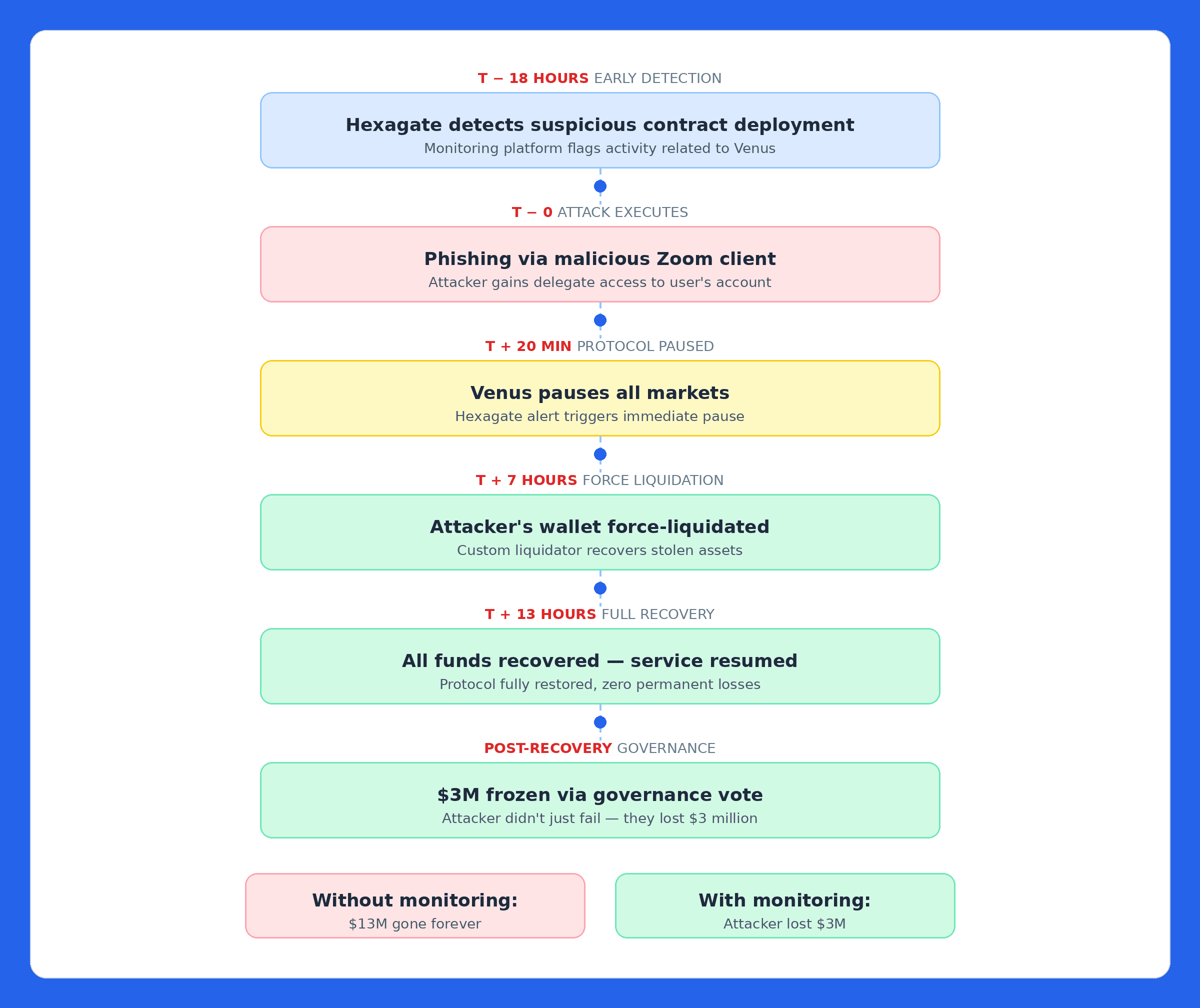

The Venus Story Should Scare You - Then Reassure You

On September 2, 2025, a Venus Protocol user was targeted by a phishing attack. The attacker used a malicious Zoom client to gain device access, then tricked the victim into signing a transaction granting delegate access. $13 million was at risk.

Eighteen hours before the attack, Hexagate's monitoring had already flagged a suspicious contract deployment. When the malicious transaction hit, Venus's team was ready. Within 20 minutes, the protocol was paused. Within 7 hours, the attacker's wallet was force-liquidated. Within 13 hours, every dollar was recovered. Then the community froze $3 million of the attacker's own assets. The attacker lost money.

Without monitoring, the default is: funds drained, bridged to Ethereum, routed through Tornado Cash, post-mortem thread published. Venus rewrote that script with one layer, monitoring installed just one month prior.

The attacker didn't just fail to profit. They lost $3 million. That's what one security layer, monitoring, bought Venus Protocol.

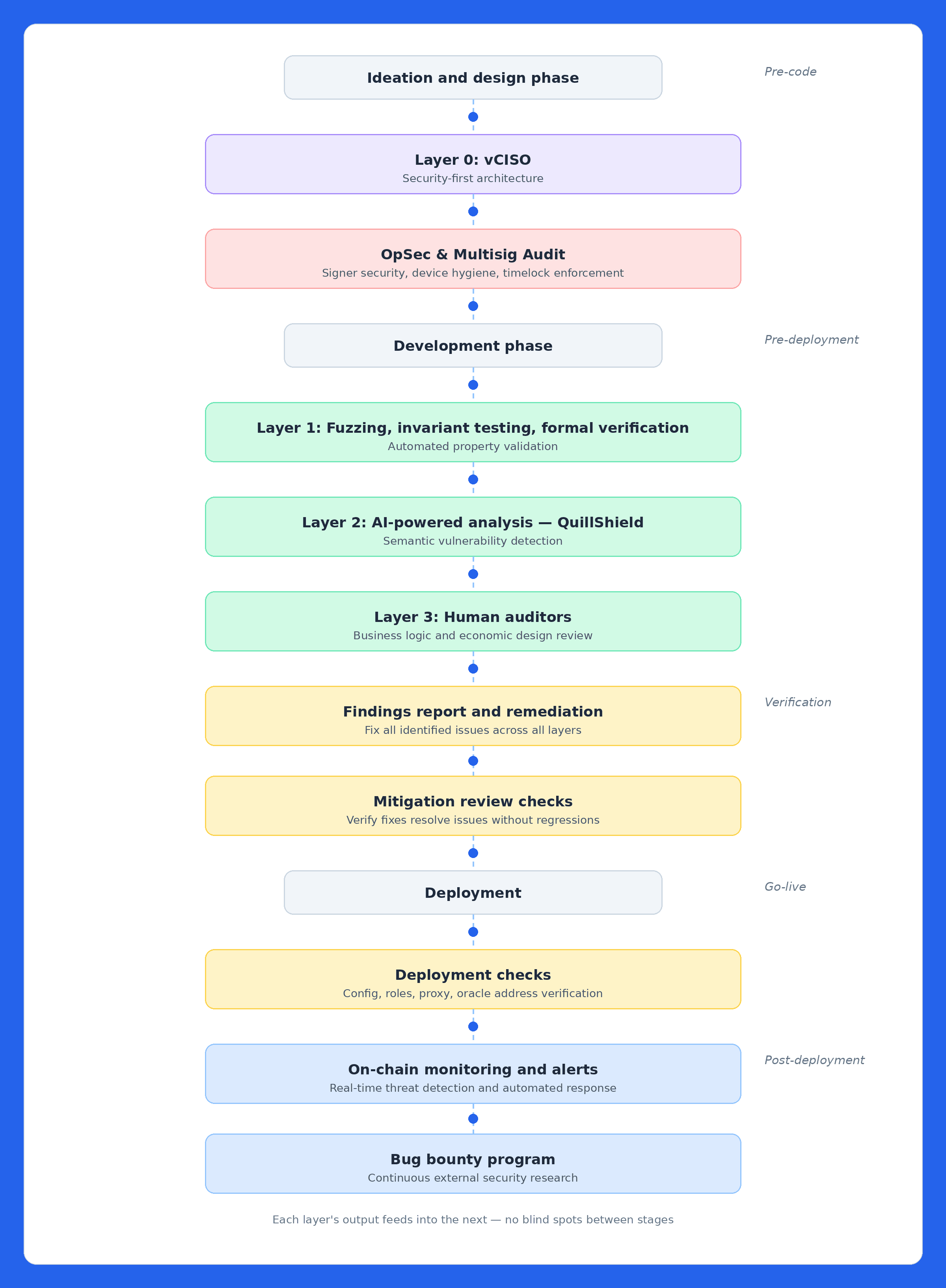

How the Multilayer Security Stack Works

Here's the complete lifecycle. Each layer exists because every other layer has a structural blind spot. The order matters: each layer's output makes the next one more effective.

The vCISO's threat model tells fuzzers what to test. Fuzzing results direct the AI engine. AI findings guide human auditors. Audit findings shape monitoring rules and bounty scope. Monitoring feeds anomalies back for ongoing assessment.

When these layers come from five different vendors who never communicate, you get the illusion of multilayer security. When they're integrated under one team, you get actual defense in depth.

Layer 0: vCISO - Kill the Bug Before Code Exists

The costliest 2025 vulnerabilities came from design decisions, not Solidity typos. Access control failures caused $1.6 billion in losses in H1 2025, nearly 70% of all stolen funds. These are architecture bugs, key management bugs, and operational security bugs. No line-by-line review catches them because the code is doing exactly what it was designed to do. The design was just wrong.

A vCISO engagement covers the full pre-code attack surface: tokenomics design (inflation mechanics, governance manipulation vectors, treasury fund flows), architectural security (contract upgradeability patterns, trust boundaries between modules, composability assumptions with external protocols), key management (signing workflows, multisig thresholds, third-party UI dependencies), and economic risk modeling (liquidation cascading, oracle dependency mapping, market condition stress tests). The goal is to catch every class of flaw that becomes unfixable once code is written.

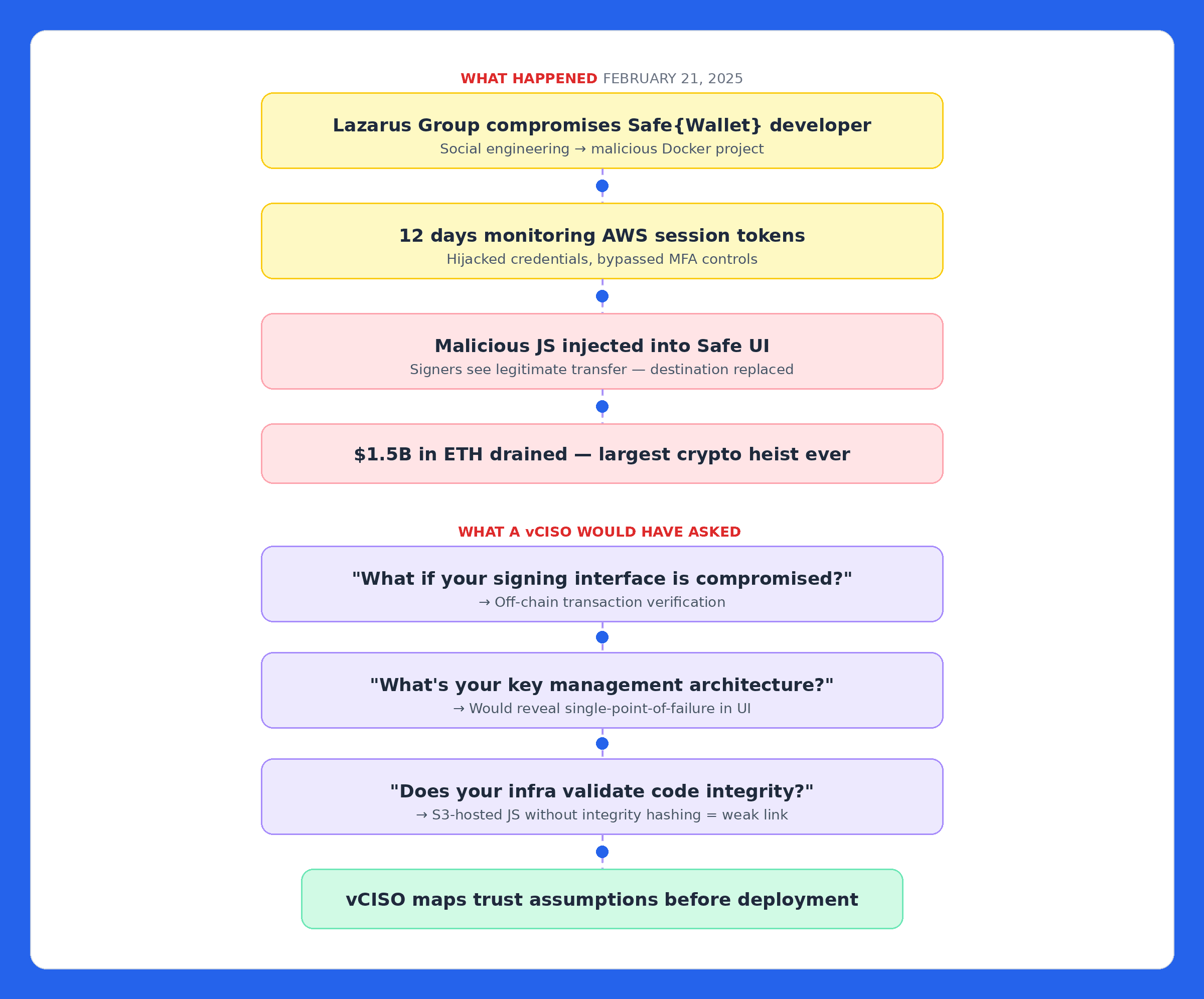

The Proof: How This Would Have Changed Bybit

Bybit's $1.5 billion breach, the largest crypto heist ever, wasn't a smart contract exploit. Lazarus Group compromised a Safe{Wallet} developer's machine, spent 12 days monitoring AWS session tokens, then injected malicious JavaScript into the Safe UI. Signers saw a legitimate transaction; the destination was silently replaced.

A vCISO would have asked: What if your signing interface is compromised? That question leads to off-chain transaction verification. What's your key management architecture? That reveals the single-point-of-failure reliance on one third-party UI. Does your signing infrastructure validate code integrity? Safe{Wallet}'s JS was served without Subresource Integrity hashing or modification alerts.

The Bybit hack exploited trust assumptions. A vCISO maps those before the first contract is deployed.
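To make the Subresource Integrity point concrete, here is a minimal Python sketch of how an integrity value is derived and checked. The function names and the sample script contents are hypothetical; only the `sha384-<base64 digest>` format comes from the SRI specification. It illustrates the mechanism and does not describe Safe{Wallet}'s actual build pipeline.

```python
import base64
import hashlib


def sri_hash(content: bytes) -> str:
    """Return an SRI integrity value (SHA-384, base64-encoded) for a file's bytes."""
    digest = hashlib.sha384(content).digest()
    return "sha384-" + base64.b64encode(digest).decode("ascii")


def matches_pinned_hash(content: bytes, pinned: str) -> bool:
    """True only if the served bytes still hash to the value pinned at release time."""
    return sri_hash(content) == pinned


# Hypothetical bundle contents, used only for illustration.
released = b"function sign(tx) { return wallet.sign(tx); }"
tampered = b"function sign(tx) { tx.to = ATTACKER; return wallet.sign(tx); }"

pinned = sri_hash(released)
print("released bundle passes:", matches_pinned_hash(released, pinned))
print("tampered bundle passes:", matches_pinned_hash(tampered, pinned))
```

A browser performs the equivalent comparison when a script tag carries an `integrity` attribute, and refuses to run a script whose hash does not match.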

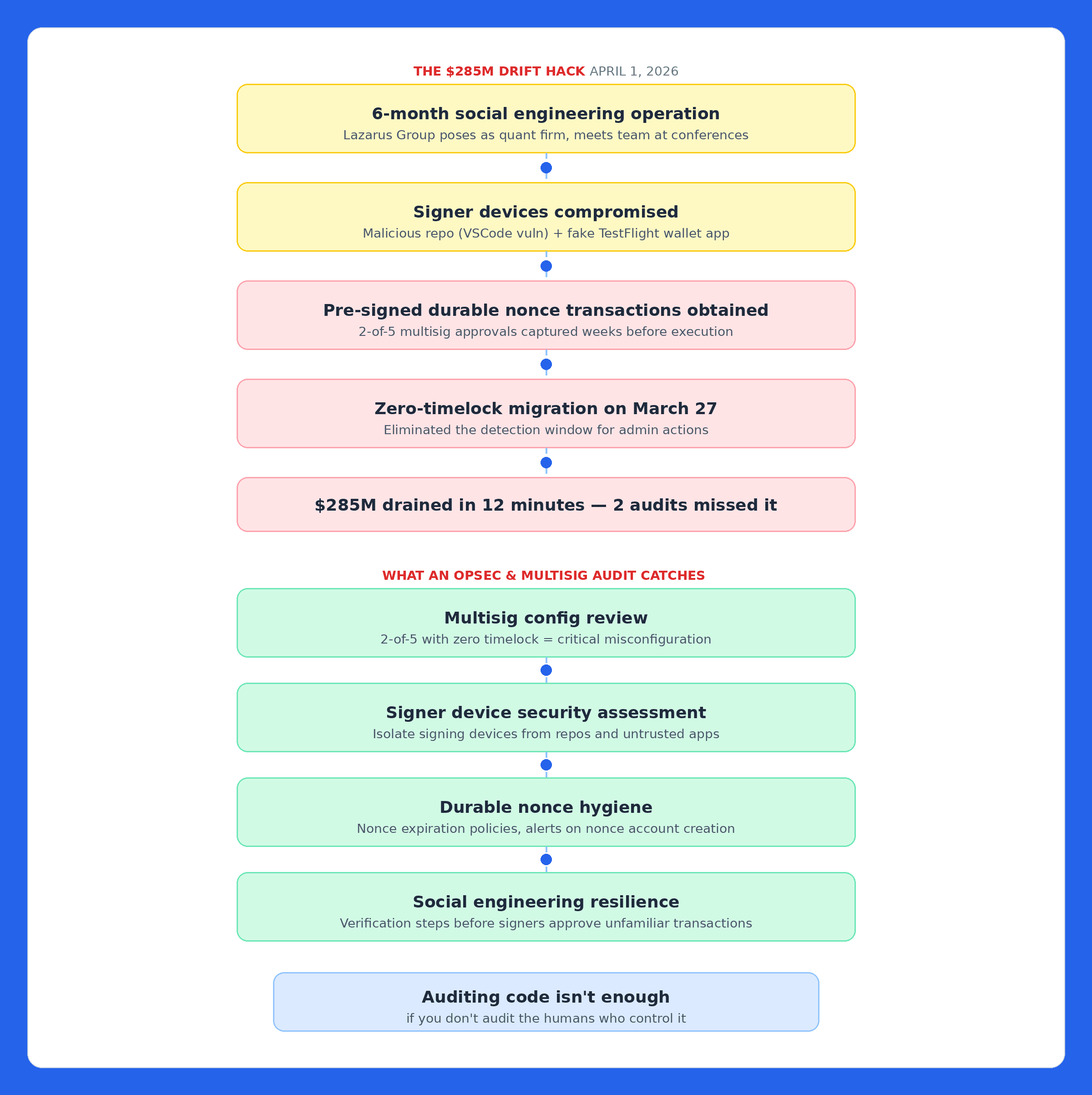

OpSec & Multisig Audit - Because the Smartest Exploits Never Touch Your Code

On April 1, 2026, Drift Protocol lost $285 million in 12 minutes, not to a code bug, but to a six-month social engineering operation.

The most expensive bugs aren't in your Solidity. They're in your signing ceremony.

The Proof: The $285M Drift Hack

Lazarus operatives posed as a quant trading firm, met Drift contributors at conferences over six months, deposited $1M+ into an Ecosystem Vault, and built a functioning ecosystem presence. Then one contributor cloned the attackers' code repo (which exploited a VSCode/Cursor vulnerability) and another downloaded their TestFlight app. Both actions gave the attackers access to devices used by Drift's multisig signers.

The attackers induced two of five Security Council members into pre-signing transactions via Solana's durable nonce feature. On March 27, Drift migrated to a 2-of-5 threshold with zero timelock. On April 1, the attacker activated those transactions, took protocol control, listed a fake token as collateral, and drained $285M in under a minute. Two audit firms gave passing grades. Neither caught it, because it wasn't in the code.

What an OpSec Audit Would Have Caught

- Multisig configuration - 2-of-5 with zero timelock is a critical misconfiguration

- Signer device security - evaluating what software runs on machines that touch the multisig

- Durable nonce hygiene - requiring expiration policies and alerts on nonce account creation

- Social engineering resilience - modeling the exact threat of attackers with legitimate relationships
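The multisig configuration check in the list above can be sketched as a simple policy function. This is a minimal illustration, not an audit tool: the `MultisigConfig` fields, the 24-hour minimum timelock, and the strict-majority rule are all assumed policy values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class MultisigConfig:
    threshold: int         # signatures required to execute
    signers: int           # total number of signers
    timelock_seconds: int  # delay between approval and execution


def review_multisig(cfg: MultisigConfig,
                    min_timelock_seconds: int = 24 * 3600) -> list[str]:
    """Return findings for a multisig setup. Policy values are assumptions."""
    findings = []
    if cfg.timelock_seconds == 0:
        findings.append("CRITICAL: no timelock, approved transactions execute immediately")
    elif cfg.timelock_seconds < min_timelock_seconds:
        findings.append("HIGH: timelock is shorter than the policy minimum")
    # Assumed policy: require a strict majority of signers.
    if cfg.threshold * 2 <= cfg.signers:
        findings.append("HIGH: threshold is not a strict majority of signers")
    return findings


# The configuration described above: 2-of-5 with zero timelock.
for finding in review_multisig(MultisigConfig(threshold=2, signers=5, timelock_seconds=0)):
    print(finding)
```

A check like this only covers configuration. Device security and social engineering resilience still need human review.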

Layer 1: Fuzzing, Invariant Testing, and Formal Verification - Machines First, Humans Second

Once code exists, machines should stress-test it before humans review it. Fuzzing throws millions of randomized inputs at contracts. Invariant testing defines rules that must always hold and tries to break them. Formal verification uses mathematical proofs to guarantee properties for all possible inputs.

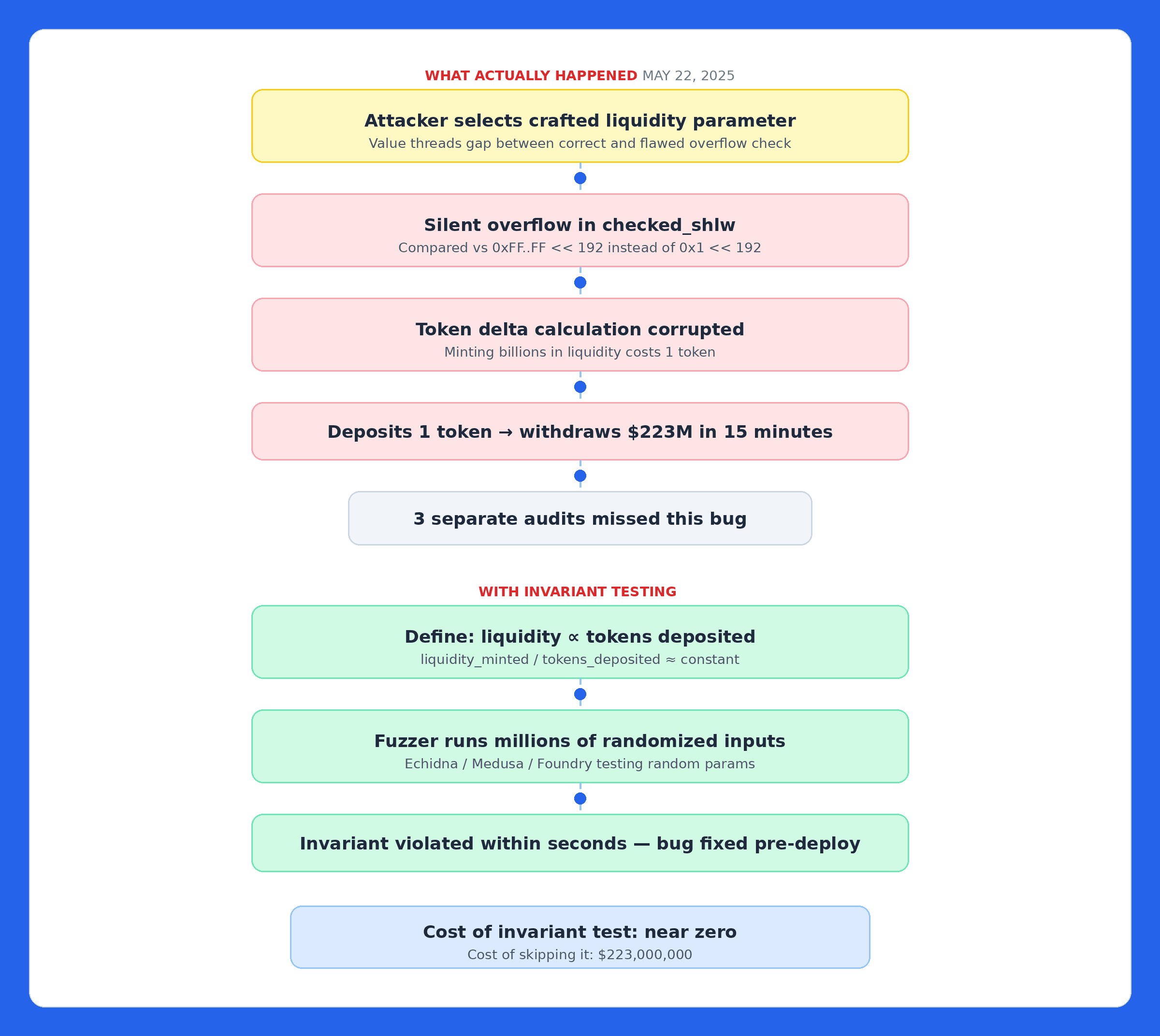

The Proof: The $223M Cetus Hack

Cetus's checked_shlw function was supposed to detect overflow when shifting a 256-bit value. The check compared against 0xFFFFFFFFFFFFFFFF << 192 instead of 1 << 192, a mask too large by a factor of 2^64 - 1. The attacker found a parameter that threaded this gap: the overflow made the protocol think minting billions in liquidity required 1 token. The attacker deposited 1 token and withdrew $223 million. Three audits missed it.

One invariant test would have caught it:

invariant: liquidity_minted / tokens_deposited ≈ constant (within tolerance)

A fuzzer would have found a counterexample in seconds. Cost of the test: near zero. Cost of skipping it: $223 million.
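As a rough illustration of how quickly random inputs expose this class of bug, here is a simplified Python model of the check described above. It is a sketch built from this article's description, not Cetus's actual Move code. It tests a lower-level property than the liquidity ratio: if the helper reports no overflow, the shifted result must equal the exact product. The 64-bit shift width and the function bodies are assumptions.

```python
import random

U256_MAX = (1 << 256) - 1


def checked_shlw_buggy(n: int) -> tuple[int, bool]:
    """Model of the flawed check: the threshold is 0xFFFFFFFFFFFFFFFF << 192."""
    if n > (0xFFFFFFFFFFFFFFFF << 192):
        return 0, True
    return (n << 64) & U256_MAX, False  # truncates to 256 bits, like a u256 shift


def checked_shlw_fixed(n: int) -> tuple[int, bool]:
    """Model of the intended check: any n >= 1 << 192 overflows when shifted by 64."""
    if n >= (1 << 192):
        return 0, True
    return n << 64, False


def fuzz(fn, trials: int = 1000, seed: int = 0):
    """Return the first input that violates the invariant, or None if none is found."""
    rng = random.Random(seed)
    for _ in range(trials):
        n = rng.getrandbits(256)
        result, overflowed = fn(n)
        # Invariant: when no overflow is reported, the result is the exact product.
        if not overflowed and result != n * (1 << 64):
            return n
    return None


print("buggy version, counterexample found:", fuzz(checked_shlw_buggy) is not None)
print("fixed version, counterexample found:", fuzz(checked_shlw_fixed) is not None)
```

In this model, nearly every uniformly random 256-bit input is at least 1 << 192 yet still below the flawed threshold, so the buggy version fails almost immediately. A production fuzzer would also bias inputs toward boundary values.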

Layer 2: AI-Powered Security - Cover the 70% Humans Never Reach

Fuzzers test properties you define. AI identifies patterns you didn't think to look for. Human audits run 2–4 weeks, so auditors must triage and skip lower-priority paths. Attackers don't skip anything.

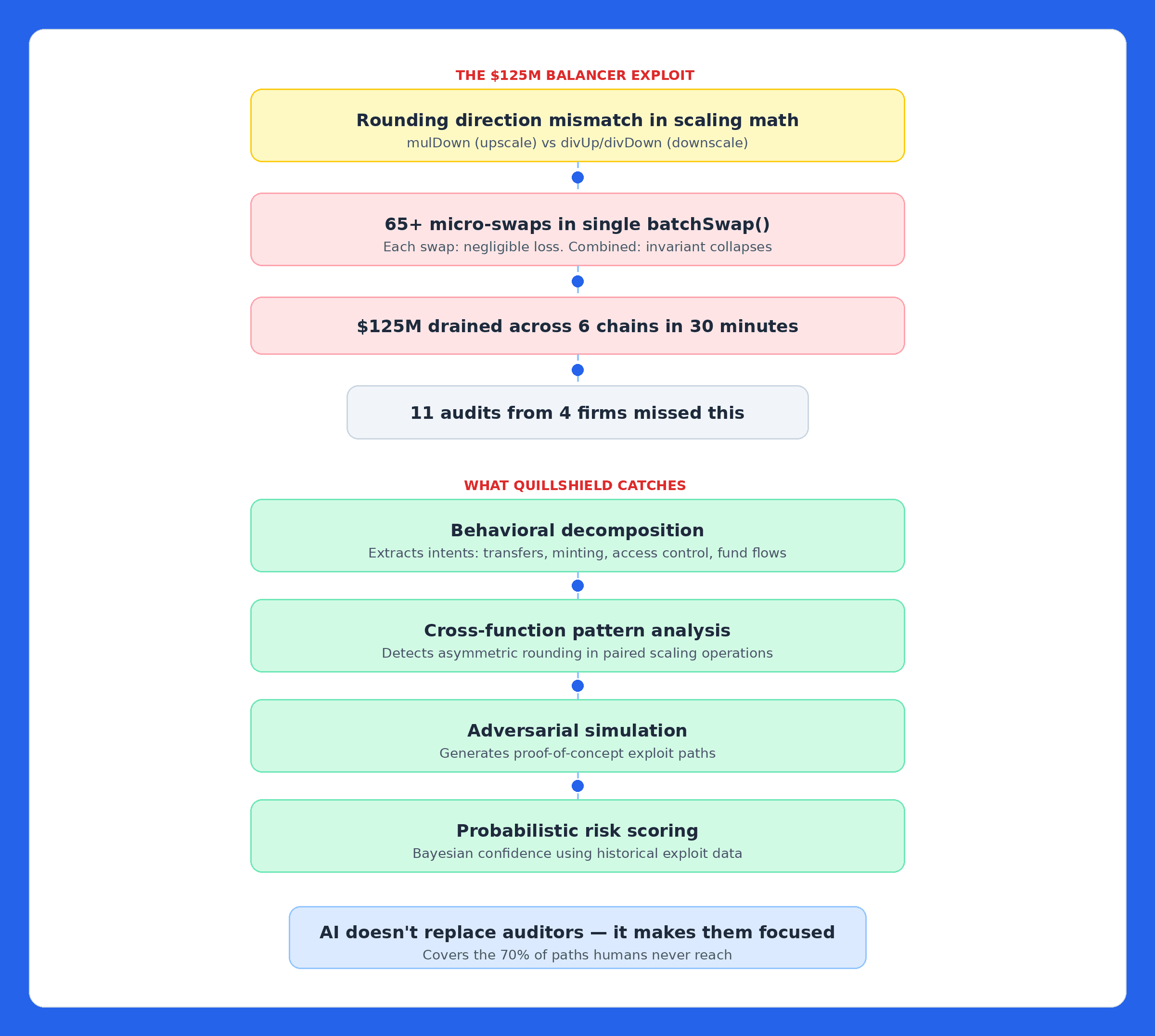

The Proof: The $125M Balancer V2 Exploit - Missed by 11 Audits

The attacker exploited a rounding direction mismatch: upscaling used mulDown, while downscaling used divUp/divDown. Each swap produced negligible precision loss. But 65+ micro-swaps inside a single batchSwap() compounded those truncations, deflated the pool invariant, and drained $125 million across six chains in 30 minutes.

An AI engine examining _upscale and _downscale together, not in isolation, would detect asymmetric rounding that doesn't preserve the invariant under adversarial sequences. This is exactly the cross-functional behavioral pattern AI catches.
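The compounding effect is easier to see in a toy model. The sketch below is not Balancer's code: the 18-decimal fixed-point helpers, the 1.1 scaling factor, and the 9-unit starting amount are assumptions chosen to make the truncation visible. It shows that the rounding direction used on the way back down decides whether a repeated round trip preserves a small balance or erodes it.

```python
ONE = 10**18  # 18-decimal fixed-point unit


def mul_down(a: int, b: int) -> int:
    """Fixed-point multiply, rounding toward zero."""
    return a * b // ONE


def div_down(a: int, b: int) -> int:
    """Fixed-point divide, rounding toward zero."""
    return a * ONE // b


def div_up(a: int, b: int) -> int:
    """Fixed-point divide, rounding up."""
    return (a * ONE + b - 1) // b


def round_trips(amount: int, rate: int, steps: int, downscale) -> int:
    """Upscale with mul_down, then downscale, repeated `steps` times."""
    for _ in range(steps):
        amount = downscale(mul_down(amount, rate), rate)
    return amount


rate = 11 * ONE // 10  # hypothetical scaling factor of 1.1
print("div_down after 5 round trips:", round_trips(9, rate, 5, div_down))
print("div_up after 5 round trips:  ", round_trips(9, rate, 5, div_up))
```

Each individual step loses at most one unit, which looks harmless in isolation. Only an analysis that follows both helpers across a sequence of operations sees the balance drift.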

AI doesn't replace human auditors. It covers the execution paths they can't reach in a fixed window and surfaces candidate issues for deeper investigation.

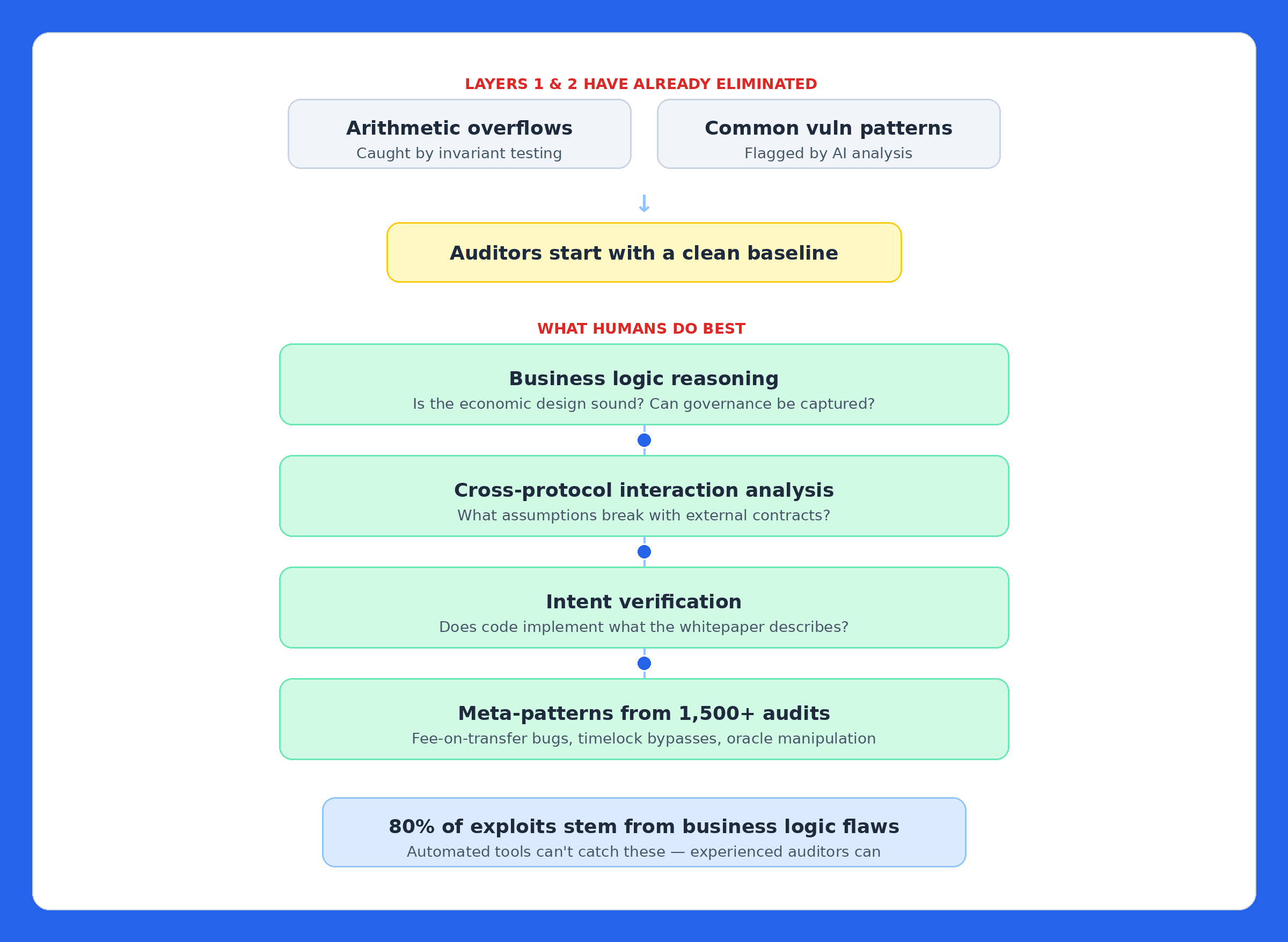

Layer 3: Human Auditors - Contextual Judgment That Machines Can't Replicate

Machines can tell you a function behaves unexpectedly. They can't tell you a governance mechanism creates perverse incentives, a token's economics will collapse under specific conditions, or composability assumptions break with a specific external contract.

By this point, Layers 1 and 2 have eliminated entire bug classes. Auditors focus on what they do best: business logic, economic design, governance edge cases, and protocol-specific intent. Roughly 80% of exploited vulnerabilities are business logic flaws, and auditors who start with a clean automated baseline can dedicate full bandwidth to finding them.

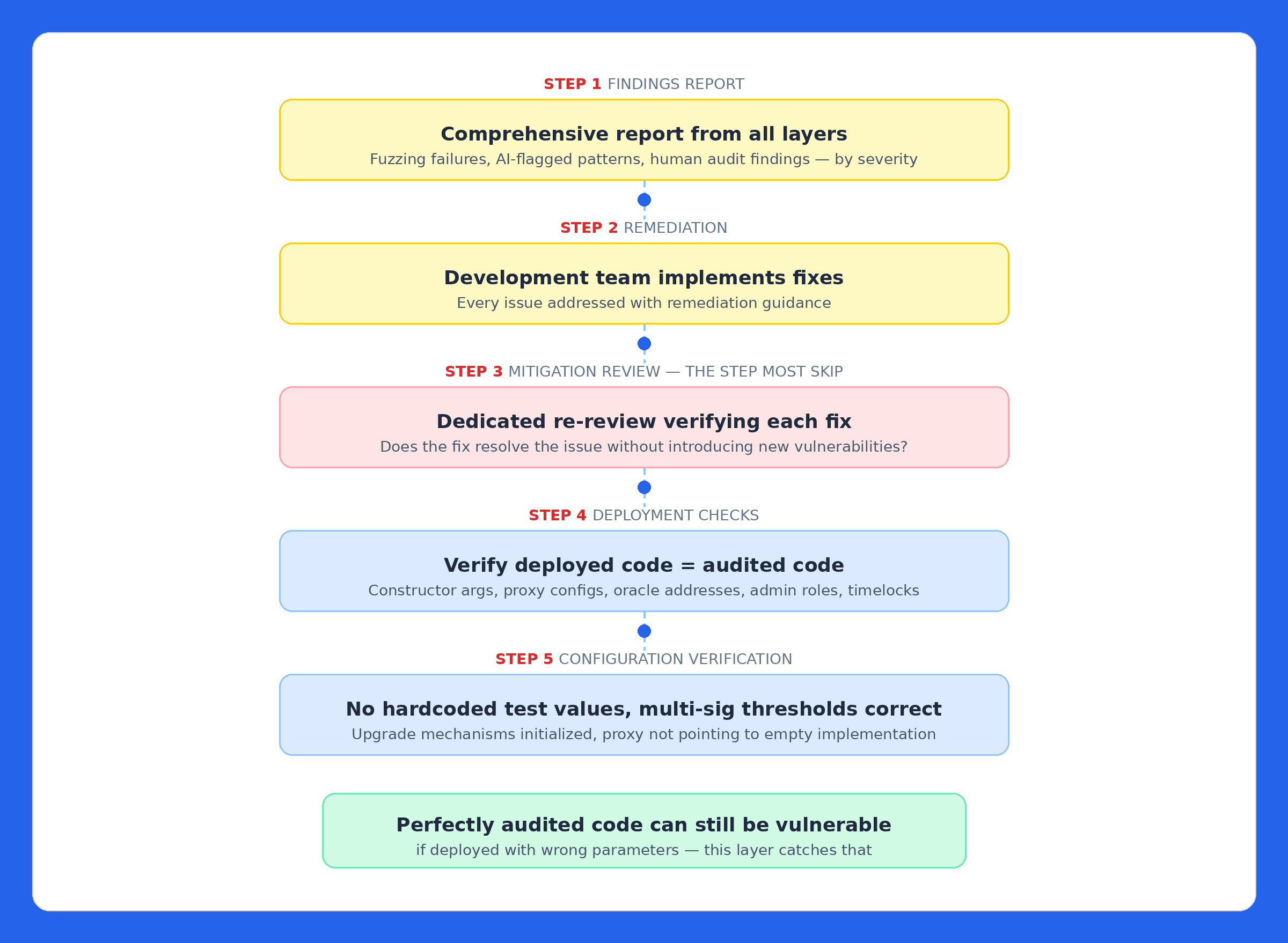

The Critical Handoff: Remediation, Mitigation Review, and Deployment Checks

The audit report isn't the end. It's the start of a remediation cycle that most protocols handle poorly.

Remediation & Mitigation Review - Fixes are implemented, then a dedicated re-review verifies each fix resolves the issue without introducing new vulnerabilities. Cetus's initial post-exploit patch was actually incorrect, requiring a second fix. Catching that in review vs. production is the difference between a footnote and a headline.

Deployment Checks - Final verification that deployed code matches audited code, and configuration doesn't introduce new risks: constructor arguments, proxy configs, oracle addresses, admin roles, timelock settings, multisig thresholds. A protocol can have perfectly audited code and still be vulnerable if deployed with the wrong parameters.
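A deployment check of this kind can be sketched as a comparison of the audited build and expected parameters against what is actually live. The function below is a minimal illustration under stated assumptions: the config keys and values are hypothetical, and SHA-256 stands in for whatever code hash your tooling uses (EVM tooling typically compares keccak256 code hashes).

```python
import hashlib


def deployment_findings(audited_bytecode: bytes, deployed_bytecode: bytes,
                        expected_config: dict, deployed_config: dict) -> list[str]:
    """List every difference between the audited build/config and the live one."""
    findings = []
    # Assumption: SHA-256 is used for simplicity; any collision-resistant hash works here.
    if hashlib.sha256(audited_bytecode).digest() != hashlib.sha256(deployed_bytecode).digest():
        findings.append("bytecode differs from audited build")
    # Check keys from both sides so missing and unexpected settings are reported too.
    for key in sorted(expected_config.keys() | deployed_config.keys()):
        expected = expected_config.get(key)
        actual = deployed_config.get(key)
        if expected != actual:
            findings.append(f"{key}: expected {expected!r}, found {actual!r}")
    return findings


# Hypothetical values for illustration only.
expected = {"timelock_seconds": 172800, "multisig_threshold": 3, "oracle": "0xORACLE_A"}
deployed = {"timelock_seconds": 0, "multisig_threshold": 3, "oracle": "0xORACLE_A"}
for finding in deployment_findings(b"\x60\x80", b"\x60\x80", expected, deployed):
    print(finding)
```

Running this as a gate in the release pipeline turns the checklist into something that fails loudly instead of relying on someone remembering to look.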

On-Chain Monitoring - Because Security Begins at Deployment

You already know the Venus story from the top of this article. Here's why it matters structurally:

A smart contract audit reviews code at a single point in time. It cannot account for runtime conditions, novel attack combinations that emerge post-deployment, vulnerabilities introduced through upgrades, or changes in the economic environment that make previously safe assumptions dangerous.

Effective on-chain monitoring isn't generic alerting. It models your specific business logic, economic assumptions, and invariants. It catches attacks that generic monitoring tools miss: price manipulation, oracle deviations, liquidation anomalies, economic attacks targeting AMMs, lending markets, and stablecoins. When something triggers, alerts flow immediately through Slack, PagerDuty, or operations pipelines, enabling protocol pauses, automated mitigation, or fund freezes before losses compound.
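As one example of a protocol-aware rule, here is a minimal sketch of an oracle deviation check that separates "alert a human" from "trigger a pause". The `Alert` type, the rule name, and both thresholds (200 and 1,000 basis points) are assumptions for illustration. A real deployment would tune them per market and wire the result into its own alerting and pause mechanisms.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Alert:
    rule: str
    detail: str
    pause: bool  # True when the deviation is large enough to justify pausing


def check_oracle_deviation(oracle_price: float, reference_price: float,
                           alert_bps: int = 200,
                           pause_bps: int = 1000) -> Optional[Alert]:
    """Compare the oracle price to an independent reference. Thresholds are assumptions."""
    if reference_price <= 0:
        raise ValueError("reference_price must be positive")
    deviation_bps = abs(oracle_price - reference_price) / reference_price * 10_000
    if deviation_bps <= alert_bps:
        return None
    return Alert(rule="oracle_deviation",
                 detail=f"oracle deviates {deviation_bps:.0f} bps from reference",
                 pause=deviation_bps >= pause_bps)


print(check_oracle_deviation(100.5, 100.0))
print(check_oracle_deviation(105.0, 100.0))
print(check_oracle_deviation(150.0, 100.0))
```

The same shape extends to other rules mentioned above, such as liquidation anomalies, by swapping in a different measured value and threshold.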

The protocols that survived 2025's exploit wave weren't the ones with the biggest budgets. They were the ones that treated monitoring as a first-class security layer, not an afterthought.

Bug Bounties - Continuous External Scrutiny

Running in parallel with monitoring, bug bounties incentivize the global security research community to continuously look for vulnerabilities. They're essential, but they have structural limitations when used alone.

The attention problem: Researchers choose what to look at based on bounty size, not actual risk. A $1 million bounty attracts dozens of researchers. Critical infrastructure with a modest bounty gets ignored.

The quality problem: Open bounty platforms get flooded with low-quality, duplicate, and out-of-scope reports. Teams spend more time triaging noise than addressing real findings.

The coordination problem: Most bounty programs run independently from the audit. Researchers don't know what the auditors already found. The scope isn't informed by the threat model. Findings don't feed back into monitoring rules.

Bug bounties work best when they're coordinated with the rest of the security stack, when researchers receive context from the audit about known risk areas, when the scope is informed by the threat model, and when findings feed back into monitoring rules. A curated program with vetted researchers produces higher-quality submissions than an open free-for-all.

The Cost of Disconnected Security

Balancer had eleven audits, a bounty program, and monitoring, and still lost $125M. The layers didn't communicate. Drift had two audits, but neither evaluated the multisig migration.

When one team runs the entire stack:

- vCISO's threat model informs what fuzzers test

- OpSec audit hardens the humans controlling the code

- Fuzzing results direct the AI engine

- AI findings guide human auditors

- Audit findings shape monitoring rules and bounty scope

- Monitoring feeds anomalies back for ongoing assessment

Five vendors who never speak leave gaps at every seam. Those seams are exactly where attackers live.

What This Actually Costs

Founders reading this are doing mental math and assuming this is out of reach. Let's make the math explicit.

The average DeFi exploit in 2025 drained $28.5 million. The median is lower, but the tail risk is what kills protocols: Cetus lost $223M, Balancer lost $125M, Drift lost $285M, and those were protocols that had audits.

A protocol with 2,000–5,000 lines of Solidity heading to mainnet in 8 weeks can get the full multilayer engagement (vCISO, OpSec audit, fuzzing, AI analysis, manual audit, remediation review, deployment checks, and monitoring setup) in 6–10 weeks. The cost is less than 1% of a single average exploit.

Put it differently: Drift spent more on two traditional audits that missed the operational vulnerability than a full multilayer engagement would have cost. Cetus spent more on three audits that missed the overflow than the invariant test that would have caught it would have cost. The math isn't close.
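For readers who want to check the arithmetic, the short calculation below derives the average loss and the 1% ceiling from the figures quoted earlier in this article ($2.54 billion across 89 incidents). The variable names are illustrative, and the result is only an upper bound implied by the "less than 1%" claim, not a price quote.

```python
total_losses_usd = 2_540_000_000  # 2025 losses cited in this article
incidents = 89                    # 2025 incident count cited in this article

average_exploit_usd = total_losses_usd / incidents
one_percent_usd = average_exploit_usd * 0.01

print(f"average exploit: ${average_exploit_usd:,.0f}")
print(f"1% of average:   ${one_percent_usd:,.0f}")
```

On these inputs the ceiling works out to roughly $285,000.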

The question isn't budget. It's whether you want to explain to your users why you paid for the same layer three times instead of covering the layers that actually matter.

What Happens When You Skip a Layer

Every layer covers another layer's blind spot:

- vCISO catches design flaws - can't validate code

- OpSec Audit catches signer compromise - can't validate smart contract logic

- Fuzzing catches property violations at scale - can't reason about business logic

- AI catches patterns across all paths - can't reason about novel economic logic

- Human auditors catch business logic flaws - miss 70%+ of execution paths in fixed windows

- Bug bounties provide ongoing discovery - reactive, attention follows bounty size

- Monitoring detects attacks in real time - can't fix bad code

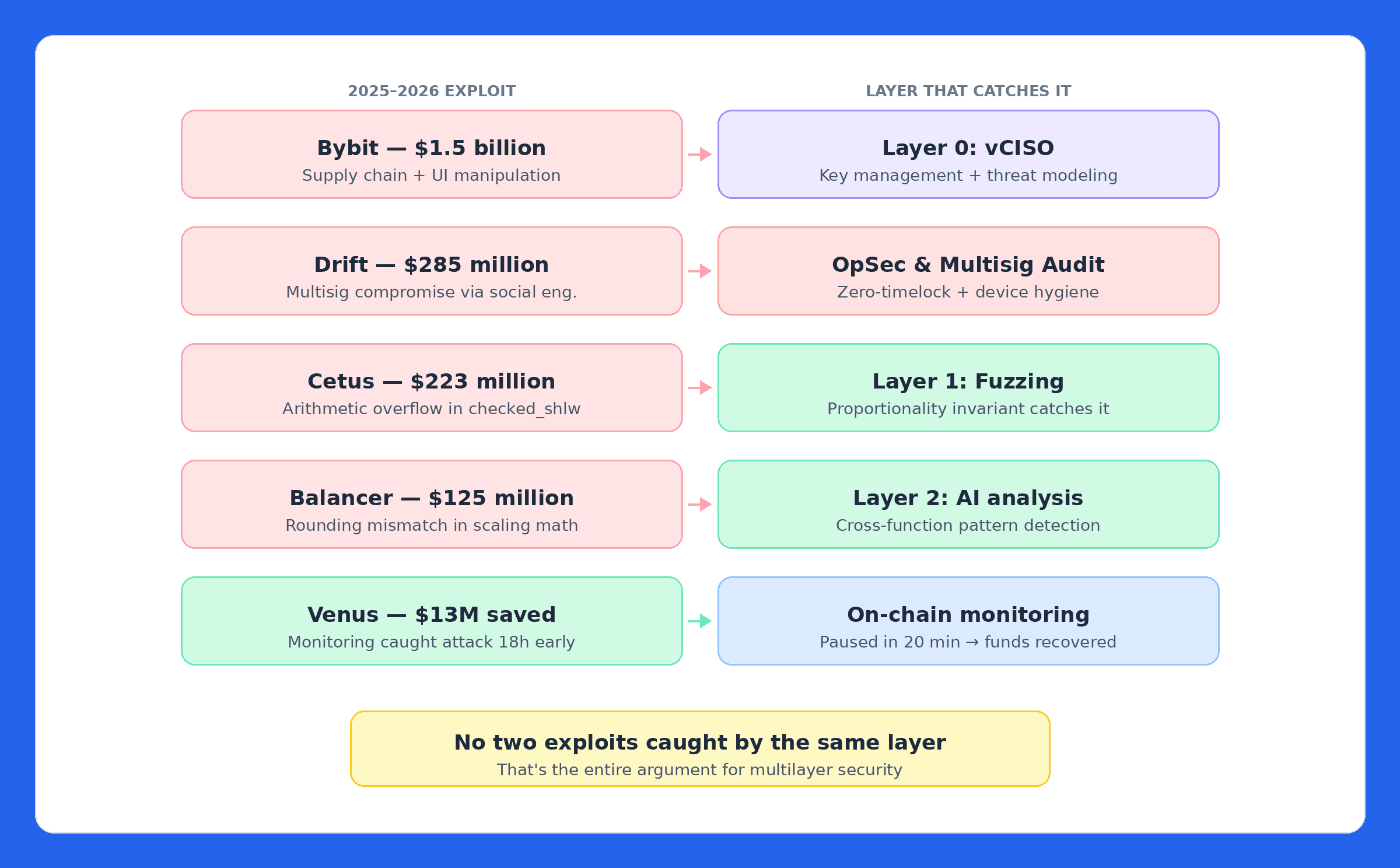

Skip any one, and you've left a gap that an attacker only needs to find once. Map this to 2025–2026's biggest exploits: no two would have been caught by the same layer.

Build This With QuillAudits

At QuillAudits, we've spent 8+ years building this stack, not as theory, but as an integrated service. 1,500+ protocol audits. $3B+ in TVL secured across Ethereum, Solana, Base, and every major EVM chain.

Our multilayer approach covers the complete security lifecycle as a single, coordinated engagement:

- vCISO - Threat modeling, architecture review, key management design from the whitepaper stage.

- OpSec & Multisig Audit - Multisig configuration, signer device hygiene, timelock enforcement, social engineering resilience.

- Fuzzing, Invariant Testing & Formal Verification - Property-based testing under millions of adversarial inputs.

- AI-Powered Analysis via QuillShield - Semantic vulnerability detection. Battle-tested on Code4rena, open-sourced for the community.

- Expert Manual Audits - Line-by-line review focused on business logic and economic design.

- Remediation & Mitigation Review - Dedicated re-review confirming fixes resolve issues without regressions.

- Deployment Checks - Pre-launch verification of deployed code and configuration.

- Bug Bounty Program Design - Curated programs coordinated with audit findings and threat models.

- On-Chain Monitoring & Alerts - Continuous, protocol-aware detection with automated response.

The integration matters as much as the layers. When one team runs the entire stack, nothing falls through the gaps between vendors.

Conclusion

Bybit. $1.5 billion. Compromised signing interface. Drift. $285 million. Compromised signer devices. Cetus. $223 million. Arithmetic overflow. Balancer. $125 million. Rounding mismatch in scaling math. Venus. $13 million attempted. Caught 18 hours early. The attacker lost money.

Five exploits. Five different root causes. Five different layers that would have caught them. No two caught by the same layer.

That's not a coincidence. That's the structure of the problem. Attackers don't limit themselves to one technique. The protocols that survived 2025 didn't limit themselves to one defense. Your protocol is either multilayer secure, or it's waiting for the exploit that fits through the gap you didn't cover.
