The Admin Audit Checklist

$2B lost from Bybit, Drift, Radiant, and Resolv, zero code bugs. Learn why an Admin Audit covers the 70% your smart contract audit misses.

Dear founders, you raised your round. You shipped your protocol. You paid six figures for a smart contract audit from a top-tier firm. You got the clean report. You tweeted the badge.

And none of it would have saved you from what happened this quarter.

On April 1, 2026, Drift Protocol, Solana's largest perpetual futures DEX, with $550M in TVL and an audit from Trail of Bits, lost $285 million in twelve minutes. The smart contracts were flawless. The attackers spent six months socially engineering the team, got two multisig signers to unknowingly pre-approve malicious transactions using Solana's durable nonce feature, and drained half the protocol's TVL before anyone could react.

Ten days earlier, Resolv lost $25 million because a single signing key was stored in AWS and the on-chain contract had zero validation on mint ratios. The attacker deposited $300K in USDC and walked away with $25M in ETH. The code worked perfectly. The infrastructure didn't.

Before that, Radiant Capital lost $50 million because its multisig threshold was 3-of-11, and the attacker compromised three signers with malware that displayed legitimate transactions while malicious ones were signed in the background. Bybit lost $1.4 billion, the largest crypto theft in history, through a compromised developer laptop that manipulated what Safe{Wallet} signers saw on their screens. WazirX lost $230 million through a near-identical front-end manipulation attack.

Over $160 million was drained from DeFi protocols in Q1 2026. Then on the first day of Q2, Drift lost another $285 million in a single afternoon. Across the biggest exploits of 2025–2026 (Bybit, Drift, WazirX, Radiant, Resolv), every protocol had passed smart contract audits. Not a single one was a code bug.

This is the blog post I wish someone had written before any of those founders had to learn this lesson with their users' money.

I'm writing it now because the pattern is unmistakable: the biggest threat to your protocol is not buggy code. It's the governance infrastructure that sits around the code: the keys, the multisigs, the cloud environments, the humans. And the only thing that audits that layer is something most founders have never heard of: an Admin Audit.

What Is an Admin Audit?

A smart contract audit reviews your Solidity. An admin audit reviews everything else.

It's the systematic examination of the operational security layer that determines who can deploy, upgrade, pause, or drain your protocol and what safeguards exist to prevent those powers from being abused. It covers key management, multisig architecture, off-chain infrastructure, social engineering resistance, on-chain safeguards, and incident response readiness.

The concept comes from military OPSEC doctrine, a five-step cyclical process for identifying critical assets, assessing threats, analyzing vulnerabilities, evaluating risk, and deploying countermeasures. The Security Alliance (SEAL), the team behind the SEAL 911 emergency hotline, SEAL Wargames, and the SEAL Certification program, has adapted these principles into comprehensive, open-source frameworks built specifically for Web3.

Here's the simplest way I can put it:

A smart contract audit tells you if the vault door is well-built. An admin audit tells you who has the keys, where they're kept, and what happens when someone steals one.

Most founders treat security as a pre-launch checkbox: hire an audit firm, fix the high-severity findings, and publish the report. But that audit only covers the on-chain code, roughly 30% of your actual attack surface. The other 70% is the operational layer, which goes completely unexamined unless you specifically commission an admin/opsec audit.

Why This Is a Founder Problem

Here's what nobody tells you when you're building: when your protocol gets exploited through an admin key compromise, the community doesn't blame the attacker. They blame you.

The post-mortem lands. Crypto Twitter dissects your multisig setup. People find out your threshold was 2-of-5. That you had no timelock. That your signing key was a single EOA in AWS. That your team member downloaded a PDF from someone they met on Telegram three months ago.

And then:

- Your TVL collapses.

- Your token crashes.

- Your users lose money.

- Lawsuits get filed.

- Downstream protocols bleed.

- Your reputation, the thing you spent years building, evaporates in a single news cycle.

This is a founder problem because the decisions that prevent these exploits are governance decisions. Multisig thresholds, timelock durations, signer selection, infrastructure architecture, and key storage policies aren't buried in code. They're architectural choices that you, as the founder, either prioritize or don't.

The breach that costs hundreds of millions never begins with a brilliant hack. It begins with an account nobody remembered to secure, a threshold nobody thought to raise, a timelock nobody bothered to implement.

And the hardest truth: every one of those decisions is cheap and straightforward to get right. The protocols that got drained didn't lack the resources to implement timelocks or raise their multisig threshold. They lacked the awareness that these things mattered as much as their Solidity.

The SEAL Framework - How Admin Audits Work

The Security Alliance (SEAL) has built the gold standard for protocol operational security. Their framework library is open-source at frameworks.securityalliance.org and covers Operational Security, Multisig Operations, Wallet Security, Infrastructure, Incident Management, Monitoring, and even DPRK-specific threat mitigation.

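SEAL's approach adapts the five-step OPSEC cycle from military doctrine into a continuous process for Web3: identify critical assets, assess threats, analyze vulnerabilities, evaluate risk, deploy countermeasures, and then repeat.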

The framework rests on five security fundamentals that should guide every founder's thinking:

Layered Protection - multiple overlapping controls so that if one mechanism fails, others hold. A multisig alone isn't enough. You also need timelocks, on-chain validation, real-time monitoring, and tested incident response.

Minimal Access Scopes - every key, signer, and service account should have only the permissions it needs and nothing more. Otherwise, one compromised credential can mean total protocol drain.

Information Flow Control - limit access to sensitive operational details (signer identities, key storage locations, infrastructure topology) on a need-to-know basis.

System Isolation - signing devices should be dedicated, hardened machines. Not the same laptops your team uses for Telegram, Discord, and downloading PDFs from former contractors.

Continuous Visibility - active monitoring of all privileged on-chain activity, automated anomaly alerting, and regular reassessment. Security is not a state, it's a practice.

The Founder's Admin Audit Checklist

You don’t need a six-month engagement to get started. Here’s a complete checklist organized into seven domains. For each one, there’s a key question you should be able to answer and how it could have prevented the real-world exploits we discussed.

Key & Wallet Inventory

The founder question: Can you list every wallet and key that has privileged access to your protocol in under five minutes?

If the answer is no, this is where you start.

Every multisig should be documented with its purpose, operating rules, wallet address, signer list, and risk classification level. Maintain a living registry that's updated after every operational or signer change. Classify wallets by impact level and map each classification to required controls.
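To make the registry idea concrete, here is a minimal sketch of what one entry and one policy check might look like. The field names, the `Impact` levels, and the thresholds in `REQUIRED` are my own illustrative assumptions, not values taken from the SEAL frameworks; adapt them to your own classification scheme.

```python
from dataclasses import dataclass, field
from enum import Enum


class Impact(Enum):
    LOW = 1
    HIGH = 2
    CRITICAL = 3


@dataclass
class PrivilegedWallet:
    name: str
    address: str
    purpose: str
    impact: Impact
    signers: list[str] = field(default_factory=list)
    threshold: int = 1          # signatures required to act
    has_timelock: bool = False


# Hypothetical policy: minimum controls per impact level (illustrative numbers).
REQUIRED = {
    Impact.LOW: {"min_threshold": 1, "timelock": False},
    Impact.HIGH: {"min_threshold": 3, "timelock": True},
    Impact.CRITICAL: {"min_threshold": 5, "timelock": True},
}


def control_gaps(wallet: PrivilegedWallet) -> list[str]:
    """Return the controls this wallet is missing for its impact level."""
    policy = REQUIRED[wallet.impact]
    gaps = []
    if wallet.threshold < policy["min_threshold"]:
        gaps.append(
            f"threshold {wallet.threshold} < required {policy['min_threshold']}"
        )
    if wallet.impact is not Impact.LOW and len(wallet.signers) <= 1:
        gaps.append("single-key control (no multisig)")
    if policy["timelock"] and not wallet.has_timelock:
        gaps.append("no timelock")
    return gaps


# Example entry: a minting role held by one externally owned account.
# The address is a placeholder, not a real deployment.
minter = PrivilegedWallet(
    name="minter",
    address="0x0000000000000000000000000000000000000001",
    purpose="mint stablecoin against deposits",
    impact=Impact.CRITICAL,
    signers=["ops-service"],
)
for gap in control_gaps(minter):
    print(f"{minter.name}: {gap}")
```

Running a check like this over the whole registry after every signer or role change turns the inventory from a document into a control.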

What this would have prevented: A complete inventory would have immediately flagged Resolv's single-EOA SERVICE_ROLE as a critical vulnerability, a privileged minting key controlled by one account with no multisig protection.

You can't audit what you can't see. If you don't know every key that can move money in your protocol, you're not running a protocol; you're running a lottery.

Multisig Architecture & Governance

The founder question: If an attacker compromises one of your signers tomorrow, what can they do?

Recommendations:

- High thresholds proportional to TVL. For protocols managing significant user funds, 5-of-7 or higher. Avoid N-of-N (key loss = permanent lockout).

- Diverse signers. Mix of roles (executive, developer, operational), geographies, and timezones. At least one external signer, a security partner or trusted advisor outside your organization.

- Dedicated signing wallets. SEAL explicitly discourages signers from reusing addresses for other purposes. Dedicated wallets, monitored for non-multisig activity.

- Documented signer lifecycle. Formal processes for adding, replacing, and removing signers, including offboarding and periodic access reviews.

- Independent verification of signer changes. Any critical administrative action must be verified through multiple, independent communication channels (e.g., a video call and a signed message) to prevent social engineering.

What this would have prevented: Radiant's 3-of-11 threshold meant the attacker only needed to compromise 3 out of 11 targets. Drift's threshold was lowered to 2-of-5 without a timelock weeks before the exploit. Higher thresholds with diverse, geographically distributed signers would have made both attacks significantly harder, likely infeasible.

Your multisig threshold is the number of people an attacker needs to fool. Make that number high. Make the people hard to reach. Make them diverse enough that no single phishing campaign can hit them all.
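A rough back-of-the-envelope model shows why the threshold matters so much. The sketch below assumes each signer is compromised independently with the same probability `p`, which is a simplification I'm introducing for illustration: a single phishing campaign that reaches several signers at once breaks the independence assumption, which is exactly why signer diversity matters alongside the threshold.

```python
from math import comb


def p_quorum_compromised(n: int, k: int, p: float) -> float:
    """Probability that at least k of n signers are compromised,
    assuming each is compromised independently with probability p."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


# Illustrative per-signer compromise probability; not a measured figure.
p = 0.2
low_threshold = p_quorum_compromised(n=11, k=3, p=p)   # a 3-of-11 setup
high_threshold = p_quorum_compromised(n=7, k=5, p=p)   # a 5-of-7 setup
print(f"3-of-11: {low_threshold:.4f}")
print(f"5-of-7:  {high_threshold:.4f}")
```

Under these assumptions the 3-of-11 quorum falls with probability of about 0.38, while the 5-of-7 quorum falls with probability under 0.005. Adding more signers without raising the threshold gives the attacker more targets, not fewer.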

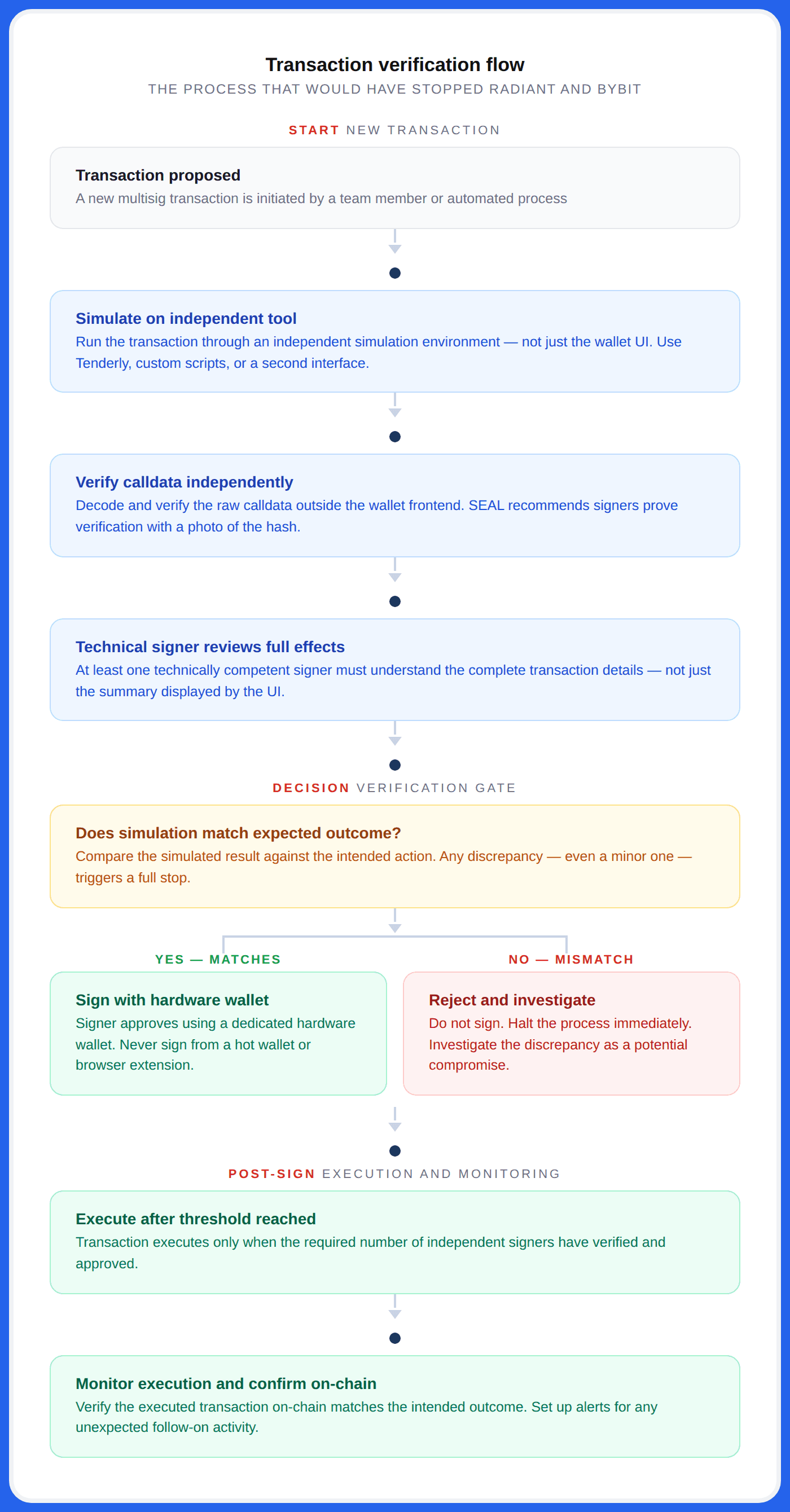

Transaction Verification & Signing Procedures

The founder question: Do your signers independently verify what they're signing, or do they trust the UI?

This is the domain where Radiant and Bybit were destroyed. Both attacks manipulated the Safe{Wallet} frontend to display legitimate-looking transactions while the actual calldata was malicious. The signers had no reason to suspect anything; the interface looked normal.

Recommendations:

- Never sign blindly. This is SEAL's foundational principle for transaction security.

- Simulate every non-trivial transaction on an independent tool before signing. Not just the wallet UI, but an independent simulation environment.

- Verify calldata independently. SEAL recommends signers prove they've verified the calldata using a photo of the hash or a similar verification artifact.

- At least one technically competent signer must review the full transaction details and effects, not just the summary displayed by the UI.

- Extra scrutiny for durable nonces (Solana) and deferred transactions. These can be pre-signed and executed days or weeks later, the exact mechanism used in the Drift exploit.

- Use diverse client software to interact with the multisig. This mitigates single-point-of-failure risks from a vulnerability in one software package.

What this would have prevented: At Radiant, the malware displayed legitimate transaction data while malicious transactions were signed in the background. Independent calldata verification, decoding the raw transaction data outside the compromised UI, would have revealed the discrepancy. At Drift, the durable nonce transactions appeared routine but contained instructions to transfer admin control. A technical signer reviewing the full calldata would have flagged the authority transfer.
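Independent calldata verification can be as simple as decoding the raw bytes yourself and comparing them with what you were told you are signing. The sketch below is a toy decoder, not a replacement for a proper ABI library: it only handles static 32-byte arguments, the selector table is hard-coded with a few well-known EVM function selectors, and the helper names (`decode_call`, `matches_intent`, `word_address`, `word_uint`) are my own.

```python
# A few well-known 4-byte selectors, hard-coded for illustration.
# A real tool would derive selectors from the contract ABI.
KNOWN_SELECTORS = {
    "a9059cbb": "transfer(address,uint256)",
    "095ea7b3": "approve(address,uint256)",
    "f2fde38b": "transferOwnership(address)",
    "3659cfe6": "upgradeTo(address)",
}


def word_address(addr: str) -> str:
    """Left-pad a 20-byte hex address to a 32-byte ABI word."""
    return addr.lower().removeprefix("0x").rjust(64, "0")


def word_uint(value: int) -> str:
    """Encode an unsigned integer as a 32-byte ABI word."""
    return format(value, "064x")


def decode_call(calldata_hex: str) -> tuple[str, list[str]]:
    """Split calldata into a function signature and its 32-byte argument words."""
    data = calldata_hex.lower().removeprefix("0x")
    selector, body = data[:8], data[8:]
    if len(selector) != 8 or len(body) % 64 != 0:
        raise ValueError("calldata is not a selector plus whole 32-byte words")
    words = [body[i:i + 64] for i in range(0, len(body), 64)]
    return KNOWN_SELECTORS.get(selector, f"unknown:{selector}"), words


def matches_intent(calldata_hex: str, signature: str, words: list[str]) -> bool:
    """True only if the raw calldata is exactly the call the signer expects."""
    return decode_call(calldata_hex) == (signature, words)


# The UI claims this is a routine token transfer; the raw bytes say otherwise.
recipient = "0x" + "22" * 20   # placeholder addresses
attacker = "0x" + "11" * 20
raw = "0xf2fde38b" + word_address(attacker)
expected = ("transfer(address,uint256)", [word_address(recipient), word_uint(1000)])
print(decode_call(raw)[0])
print(matches_intent(raw, *expected))
```

The point of the design is that the comparison runs on a machine and toolchain separate from the wallet UI, so a compromised frontend cannot influence both sides of the check.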

The most expensive click in crypto isn't a trade. It's a multisig signer approving a transaction they didn't verify.

Timelocks & On-Chain Safeguards

The founder question: If an attacker gains admin access right now, how fast can they drain everything?

If the answer is "immediately" (and for most protocols, it is), you have a critical design flaw that no smart contract audit will ever catch.

Recommendations:

- Timelocks on all critical admin actions. Collateral changes, oracle updates, withdrawal limit modifications, contract upgrades, and signer changes. 24-48 hours minimum for high-impact functions.

- On-chain validation of privileged operations. Minting caps, collateral ratio sanity checks, and maximum single-transaction limits.

- Rate limiting. Even with valid admin keys, hard-cap what can be withdrawn or minted per time window.

- Emergency pause mechanisms. A guardian role (separate from the admin multisig) that can freeze the protocol if anomalous activity is detected, buying time for investigation.

- Contract-level safeguards. SEAL's multisig checklist asks: "Have you evaluated contract-level security controls that could limit the impact of a multisig compromise?"
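The validation and rate-limiting items above can be modeled in a few lines. This is an off-chain Python model for reasoning about the rules, under my own assumptions: in a real protocol the same checks would live in the contract itself, and the class name `MintGuard`, the 2x ratio, and the window cap are illustrative values, not anyone's production parameters.

```python
class MintGuard:
    """Models two on-chain safeguards: a bound on mint size relative to the
    deposit, and a hard cap on total minting per time window."""

    def __init__(self, max_ratio: int, window_cap: int, window_seconds: int):
        self.max_ratio = max_ratio
        self.window_cap = window_cap
        self.window_seconds = window_seconds
        self._window_start = 0
        self._minted = 0

    def check_mint(self, deposit_value: int, mint_value: int, now: int) -> None:
        """Raise ValueError if the mint violates either safeguard."""
        # Sanity bound: even a valid signer cannot mint far more than was deposited.
        if mint_value > deposit_value * self.max_ratio:
            raise ValueError("mint exceeds bound relative to deposit")
        # Start a fresh window once the previous one has elapsed.
        if now - self._window_start >= self.window_seconds:
            self._window_start = now
            self._minted = 0
        # Rate limit: cap the total minted inside the current window.
        if self._minted + mint_value > self.window_cap:
            raise ValueError("rate limit exceeded for this window")
        self._minted += mint_value


guard = MintGuard(max_ratio=2, window_cap=1_000_000, window_seconds=3600)
try:
    # A $300K deposit asking for $25M of output fails the ratio bound.
    guard.check_mint(deposit_value=300_000, mint_value=25_000_000, now=0)
except ValueError as err:
    print(f"blocked: {err}")
```

Note that both checks apply even when the caller holds a valid admin key, which is the whole point: the key alone should not be enough.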

What this would have prevented: This is the single highest-impact domain. If Drift had a 48-hour timelock on admin functions, the community would have had two full days to notice that withdrawal limits were being raised to extreme values and a brand-new illiquid token was being listed as collateral. The $285 million exploit would have been dead on arrival. If Resolv's minting contract had a simple bounds check ("is this mint within 2x of the oracle price?"), the entire exploit would have been blocked.

A timelock is the cheapest insurance policy in DeFi. It costs you some operational flexibility. It costs an attacker their entire exploit.
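For founders who have never looked inside one, a timelock is a small state machine: queue an action, wait out the delay, then execute, with a chance to cancel in between. The sketch below is a simplified Python model under my own naming (`Timelock`, `queue`, `cancel`, `execute`), not the interface of any particular on-chain implementation.

```python
class Timelock:
    """Minimal model of a timelock: actions can only run after a fixed delay."""

    def __init__(self, delay_seconds: int):
        self.delay = delay_seconds
        self._queue: dict[str, int] = {}   # action id -> earliest execution time

    def queue(self, action_id: str, now: int) -> int:
        """Schedule an action and return the earliest time it may execute."""
        eta = now + self.delay
        self._queue[action_id] = eta
        return eta

    def cancel(self, action_id: str) -> None:
        """Remove a queued action, e.g. after monitoring flags it as malicious."""
        self._queue.pop(action_id, None)

    def execute(self, action_id: str, now: int) -> None:
        """Run the action only if it was queued and the delay has elapsed."""
        eta = self._queue.get(action_id)
        if eta is None:
            raise KeyError(f"{action_id} is not queued")
        if now < eta:
            raise PermissionError(f"{action_id} is locked for {eta - now} more seconds")
        del self._queue[action_id]


lock = Timelock(delay_seconds=48 * 3600)
lock.queue("raise-withdrawal-limit", now=0)
try:
    # An attacker with valid keys still cannot act twelve minutes later.
    lock.execute("raise-withdrawal-limit", now=12 * 60)
except PermissionError as err:
    print(f"rejected: {err}")
```

The delay only helps if someone is watching the queue, so a timelock should always be paired with monitoring and a party empowered to cancel.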

Off-Chain Infrastructure Security

The founder question: Where are your signing keys stored, and who has access to that environment?

The Resolv exploit didn't start on-chain. It started in AWS. The attacker compromised the Key Management Service (KMS) environment where the protocol's privileged signing key was stored. No smart contract audit in the world reviews your AWS IAM policies or key rotation schedules.

Checklist:

- Key storage architecture. Are admin keys on hardware wallets (Ledger, Trezor) or cloud-hosted (AWS KMS, GCP HSM)? Cloud-hosted keys introduce the cloud provider's entire attack surface as your attack surface.

- Developer device security. Are signing devices dedicated and hardened? Is endpoint detection/response (EDR) deployed? Are personal devices isolated from signing infrastructure?

- CI/CD pipeline integrity. Can a compromised deployment pipeline push a malicious upgrade? Are deployments gated behind multisig approval?

- DNS security. Is DNS protected against hijacking? SEAL has an entire separate framework for this, because DNS attacks can redirect users to phishing sites or compromise protocol frontends.

- Workspace security. Credential management, insider threat controls, and immediate offboarding when team members leave.

What this would have prevented: Resolv stored their SERVICE_ROLE key, a key with unrestricted minting authority, in a single AWS KMS environment. An MPC threshold signature requirement for that role would have made the cloud compromise insufficient to execute the attack.

Your protocol is only as secure as the least-secured laptop that can sign a transaction.

Social Engineering Resistance

The founder question: If a Lazarus operative spent three months building a relationship with one of your team members, would your defenses hold?

This isn't hypothetical. Drift's post-mortem reveals the attackers began their social engineering campaign in Fall 2025, six months before the April 2026 exploit. Radiant's attackers impersonated a former contractor and sent a malicious PDF disguised as a smart contract audit report. At Bybit, the initial compromise traced back to a Docker project downloaded from a social engineering lure.

SEAL maintains a dedicated framework specifically on DPRK IT workers and social engineering tactics. These are sophisticated, long-term campaigns run by state-sponsored groups with functionally unlimited patience.

Checklist:

- Never download files from unverified sources. Use browser-based collaboration tools (Google Docs, Notion) instead of opening ZIPs, PDFs, and executables.

- Dedicated communication channels for multisig operations with documented membership controls and offboarding procedures.

- Periodic identity verification of signers, especially during sensitive operations.

- Background verification for new collaborators, contributors, and anyone requesting access to internal systems.

- DPRK-specific awareness training. Common playbooks: fake job applicants, impersonated former contractors, malicious developer tools disguised as open-source projects.

What this would have prevented: If Drift’s team had flagged and independently verified the identities of the collaborators who first reached out in Fall 2025, and avoided using their application on signing devices, the six-month social engineering campaign would have been disrupted at the outset. If Radiant's developers had a policy against downloading files from external sources, the malware would never have reached their signing devices.

The attacker who steals $285 million doesn't break down your door. They knock, introduce themselves, and spend six months earning a key.

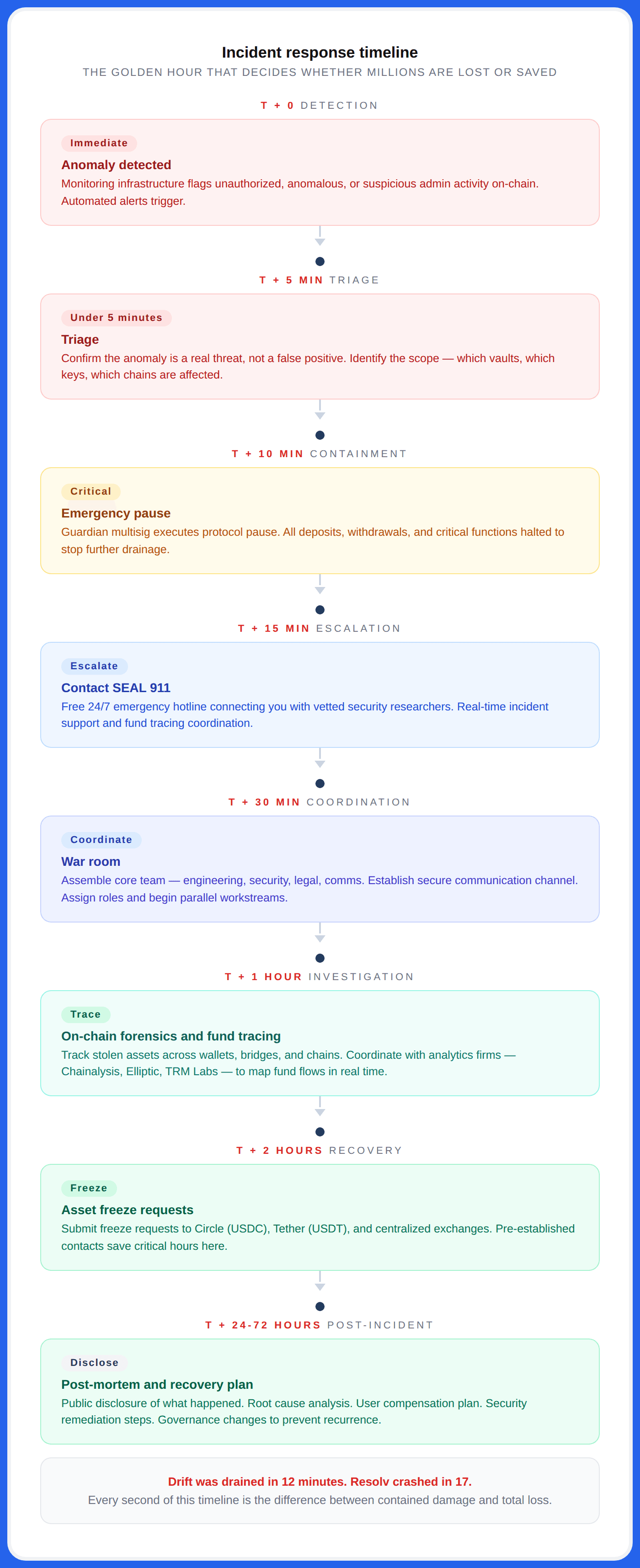

Incident Response & Emergency Operations

The founder question: If you're under active attack at 3 AM, can you reach a signing quorum and execute an emergency pause within fifteen minutes?

Drift was drained in twelve minutes. Resolv's stablecoin crashed to $0.025 in seventeen minutes. At those speeds, "we'll figure it out when it happens" is a plan that costs nine figures.

Checklist:

- Written incident response playbook. Who gets called, in what order, through which channels? What actions can be taken unilaterally vs. requiring a quorum?

- 24/7 reachability. SEAL recommends 24/7 paging capability for emergency-class multisigs with documented escalation paths.

- Pre-established contacts at Circle and Tether for emergency stablecoin freezes. (ZachXBT publicly criticized Circle for failing to freeze stolen USDC during the Drift exploit, despite it being bridged during U.S. business hours.)

- SEAL 911 integration. Free, 24/7 emergency hotline connecting you with vetted world-class security researchers during active incidents. This should be in every protocol's incident response plan.

- Regular drills. SEAL Wargames simulates realistic exploit scenarios, oracle manipulation, leaked private keys, so your team pressure-tests detection, coordination, and response before the real thing.

- Backup infrastructure. Documented backup signing interfaces, alternate RPC/explorers, and failover procedures, tested regularly.

- Monitoring infrastructure. Detect unauthorized, anomalous, or suspicious admin activity in real-time. Tools like QuillAudits On-Chain Monitoring can alert or automatically trigger pauses.

The difference between a $25 million loss and a $250 million loss is usually fifteen minutes of response time you didn't plan for.
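The monitoring item in the checklist above ultimately reduces to rules evaluated against every privileged on-chain action. Here is a minimal sketch of one such rule; the event shape (`AdminEvent`), the function names in the example, and the decision to pause on any match are my own illustrative assumptions rather than the behavior of any specific monitoring product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AdminEvent:
    sender: str        # account that initiated the privileged call
    function: str      # name of the admin function invoked
    timelocked: bool   # whether the call went through the timelock


def should_pause(
    event: AdminEvent,
    allowed_senders: set[str],
    sensitive_functions: set[str],
) -> bool:
    """Flag privileged activity that warrants an emergency pause."""
    # Any privileged call from an unexpected account is an immediate red flag.
    if event.sender not in allowed_senders:
        return True
    # Sensitive actions must never bypass the timelock, even from known signers.
    if event.function in sensitive_functions and not event.timelocked:
        return True
    return False


allowed = {"admin-multisig"}
sensitive = {"setWithdrawalLimit", "listCollateral", "upgrade"}

event = AdminEvent(sender="admin-multisig", function="listCollateral", timelocked=False)
if should_pause(event, allowed, sensitive):
    print("page on-call signers and trigger guardian pause")
```

Keeping the rule this blunt is deliberate: at the response speeds described above, a false alarm that pages the team is far cheaper than a missed drain.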

The Maturity Model - Where Does Your Protocol Sit?

Not every protocol can implement everything overnight. But honestly assessing where you stand today is the critical first step. Based on the SEAL certification tiers and the patterns we've seen in real incidents:

The uncomfortable reality: most DeFi protocols today sit between Level 1 and Level 2. A handful of blue-chip protocols have reached Level 3. Almost none have reached Level 4. Level 5 is the standard the industry needs, and the SEAL certification program only started issuing formal certifications this year.

Meanwhile, the attackers, particularly DPRK-affiliated groups like Lazarus, are operating at a sophistication level that assumes their targets are at Level 4 or higher. They run six-month social engineering campaigns. They develop custom malware for specific wallet interfaces. They exploit obscure platform features like durable nonces.

When they find a protocol at Level 1, it's not a hack. It's a harvest.

The Comparison Every Founder Needs to See

| | Smart Contract Audit | Admin / Opsec Audit |
|---|---|---|
| What it reviews | Solidity/Vyper code logic | Keys, signers, infra, governance, procedures |
| Attack vectors covered | Reentrancy, overflows, flash loans, etc. | Social engineering, key compromise, cloud breaches |
| Scope | On-chain code only | On-chain + off-chain infrastructure |
| Standard frameworks | ERC standards, SWC registry | SEAL Frameworks, NIST, military OPSEC |
| Output | Vulnerability report with severity ratings | Opsec posture assessment with control gaps |
| Frequency | Pre-deployment + major upgrades | Continuous (quarterly minimum) |
| Cost of skipping | Logic exploits | Full protocol drain ($50M – $1.4B) |

Both are necessary. Neither is sufficient alone.

You wouldn't ship a bank vault with no lock on the door. Stop shipping protocols with no audit on the keys.

The Audit Built for This Layer

We spent the last year studying every major Web3 exploit (Ronin, Radiant, Bybit, Drift) and built the service we wished every founder had access to before mainnet.

QuillAudits OPSEC & Multisig Audit is purpose-built for the part of your attack surface that sits outside your Solidity. It's structured on the SEAL framework library and delivered by the same team that has secured $3B+ across 1,500+ smart contract audits.

The audit covers six domains: dependency layer (oracles, liquidations, bridges), infrastructure layer (frontend, DNS, CI/CD), on-chain layer (multisig architecture, thresholds, timelocks), capability layer (admin function mapping, rate limits), human layer (signer devices, social engineering resistance), and transaction layer (blind signing, hash verification, post-execution monitoring).

The engagement runs five phases: scoping and threat modeling, on-chain deep-dive, OPSEC and infrastructure assessment, red team adversarial testing, and a final report with severity-classified findings, PoCs, and a 72-hour SLA on critical issues.

QuillAudits is the only firm offering both smart contract security and operational security from a single team, because your code, keys, governance, and people are a single attack surface and need to be audited by a team that understands how all four interact.

If an attacker compromising a single key can drain your protocol, you need this audit more than you need another code review.

Conclusion

If you're a founder reading this, the message is simple: your smart contract audit is necessary, but it is not protecting you from the attacks that are actually happening in 2026.

The losses to admin key compromises, social engineering, and infrastructure breaches didn't come from buggy code. They came from governance decisions that founders either made poorly or didn't make at all. Multisig thresholds too low. Timelocks that didn't exist. Signing keys stored in cloud environments nobody audited. Team members who downloaded PDFs from strangers.

Every one of those was a founder decision. Every one was preventable.

The SEAL frameworks exist. The checklists exist. The certification program exists. The emergency hotline exists. The tools, the knowledge, the community, they're all there.

The question is whether you'll use them before an attacker shows you why you should have.

The code was fine. The keys weren't.
